Manufacturing robotics: Franka Emika Robotics

Franka Emika's Panda is a sensitive, interconnected, and adaptive robotic arm inspired by human agility and sense of touch. With torque sensors in all seven axes, the arm can delicately manipulate objects and accomplish programmed tasks. The collaborative lightweight robot system is specifically designed to assist humans.

What sets the Franka Emika Panda apart from other single-arm collaborative robots is its training and programming method. The robot learns movements through physical demonstration, imitating an expert on the task it is being prepared for; the demonstration can come from a person or from another robot. To train the robot to perform a specific action, the user manually guides the arm through a sequence of motions while pressing buttons on the arm so that the robot commits each step to memory. The arm learns the task in only a few minutes and can start repeating the action without complicated software updates or programming. The user can also choose from several pre-designed apps to use the arm for other tasks.
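The teach-by-demonstration workflow described above can be pictured as recording joint configurations during hand guiding and interpolating between them on playback. The following is a minimal sketch under that assumption, not Franka Emika's software: the class `TaughtTask`, its methods, and the sample joint angles are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

NUM_JOINTS = 7  # the Panda arm has seven axes


@dataclass
class TaughtTask:
    """Hypothetical container for poses captured while the arm is hand-guided."""
    waypoints: List[List[float]] = field(default_factory=list)

    def record(self, joint_angles: List[float]) -> None:
        # Called each time the user confirms a pose with a button press.
        if len(joint_angles) != NUM_JOINTS:
            raise ValueError("expected one angle per joint")
        self.waypoints.append(list(joint_angles))

    def replay(self, steps_per_segment: int = 10) -> List[List[float]]:
        # Linearly interpolate between consecutive waypoints in joint space.
        if len(self.waypoints) < 2:
            return [list(w) for w in self.waypoints]
        path = []
        for start, end in zip(self.waypoints, self.waypoints[1:]):
            for i in range(steps_per_segment):
                t = i / steps_per_segment
                path.append([a + t * (b - a) for a, b in zip(start, end)])
        path.append(list(self.waypoints[-1]))
        return path


task = TaughtTask()
task.record([0.0] * NUM_JOINTS)                       # illustrative start pose
task.record([0.1, -0.2, 0.0, -1.5, 0.0, 1.3, 0.7])    # illustrative end pose
trajectory = task.replay(steps_per_segment=4)
print(len(trajectory))  # 5 poses: 4 interpolated steps plus the final waypoint
```

A real controller would also limit velocity and acceleration along the path; linear interpolation is used here only to keep the idea visible.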

The robot can also learn from the simulation of 3D artificial environments. This allows it to swiftly construct a 3D map of its immediate surroundings, including objects and their semantic labels (for example, a chair versus a table) as well as walls, rooms, people, and other structures. The robot can then extract the information it needs from the 3D map, such as the placement of objects and rooms and the movement of humans along its route. This ability to “think”, or reason through machine learning, makes the Franka Emika robot aware of its immediate environment. As a result, the cobot is safe around human counterparts.
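A 3D map with semantic labels can be thought of as a collection of labelled positions that the robot queries while planning. The sketch below is illustrative only: `MapObject`, `SemanticMap`, and the coordinates are hypothetical and do not reflect how the Franka Emika software stores its map.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Tuple

Point = Tuple[float, float, float]  # x, y, z in metres


@dataclass
class MapObject:
    label: str       # semantic label, e.g. "chair" or "person"
    position: Point


class SemanticMap:
    """Hypothetical labelled 3D map supporting two simple queries."""

    def __init__(self) -> None:
        self.objects: List[MapObject] = []

    def add(self, label: str, position: Point) -> None:
        self.objects.append(MapObject(label, position))

    def find(self, label: str) -> List[MapObject]:
        # All objects carrying a given semantic label.
        return [o for o in self.objects if o.label == label]

    def within(self, point: Point, radius: float) -> List[MapObject]:
        # All objects no farther than `radius` from `point`.
        return [o for o in self.objects if dist(o.position, point) <= radius]


scene = SemanticMap()
scene.add("table", (0.6, 0.0, 0.0))
scene.add("chair", (1.5, 0.4, 0.0))
scene.add("person", (0.8, -0.3, 0.0))

nearby = [o.label for o in scene.within((0.0, 0.0, 0.0), radius=1.0)]
print(nearby)  # ['table', 'person']
```

A planner could use the `within` query to slow the arm whenever an object labelled "person" appears inside its workspace.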

Industrial automation is frequently used in places where precision is as essential as safety. Examples include packaging and material handling, carrying heavy loads, quality control and inspection, metal fabrication, and repetitive production. Collaborative robots, on the other hand, are frequently found working alongside people on factory production lines.

To avoid potential harm from the robotic arm in motion, the Franka Emika robot has a highly advanced manipulator that can sense its environment. It is equipped with a force-sensing control scheme and is designed to work safely alongside people.

The cobot is designed to perform tasks that require direct physical contact in a carefully controlled manner. It achieves its dexterity through torque control.
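Torque control and force sensing are often explained with a joint-space impedance law, where each joint behaves like a spring-damper pulling toward a target, combined with a threshold check on sensed external torque. The sketch below shows that generic textbook idea; it is not Franka Emika's controller, and the gains, the threshold, and the function names are assumptions chosen for illustration.

```python
from typing import List, Sequence


def impedance_torques(q_desired: Sequence[float], q: Sequence[float],
                      dq: Sequence[float], stiffness: float,
                      damping: float) -> List[float]:
    """Generic impedance law per joint: tau = k * (q_des - q) - d * dq."""
    return [stiffness * (qd - qi) - damping * dqi
            for qd, qi, dqi in zip(q_desired, q, dq)]


def contact_detected(external_torques: Sequence[float],
                     threshold_nm: float) -> bool:
    """Flag contact when any joint senses more external torque than allowed."""
    return any(abs(tau) > threshold_nm for tau in external_torques)


# Illustrative values for a seven-joint arm (not taken from any datasheet).
q_desired = [0.5] * 7   # target joint angles, rad
q = [0.4] * 7           # measured joint angles, rad
dq = [0.1] * 7          # measured joint velocities, rad/s

tau = impedance_torques(q_desired, q, dq, stiffness=100.0, damping=10.0)
print([round(t, 3) for t in tau])  # each joint is commanded about 9.0 N·m

external = [0.2, 0.1, 0.0, 6.5, 0.3, 0.1, 0.0]  # hypothetical sensed torques
print(contact_detected(external, threshold_nm=5.0))  # True: joint 4 exceeds 5 N·m
```

Lower stiffness makes the arm more compliant on contact, which is the trade-off a force-sensitive cobot tunes between accuracy and gentleness.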

Specifications:

- Degrees of freedom (DOF): 7

- Payload: 3 kg

- Sensitivity: torque sensors in all 7 axes

- Maximum reach: 855 mm

- Repeatability: +/- 0.1 mm

- Interfaces: Ethernet (TCP/IP) for visual intuitive programming, input for external enabling device, input for external activation device or a safeguard control connector and hand connector

- Interaction: enabling and guiding button, selection of guiding

- Protection rating: IP30

- Weight: 18 kg

- Hand

- Parallel gripper with exchangeable fingers

- Grasping force: continuous force maximum force

- Weight: ~0.7 kg

Bionic Robots: Jueying X20

The Jueying X20 is a quadruped robot, designed and built based on user feedback from real-world application scenarios. Its robust “dog” design enables it to be applied in search and rescue operations. Further, the company is developing coordinated multi-robot exploration that sees multiple robots deployed to work autonomously together on a single task.

The robot has a strong, durable build for operating in challenging conditions. The X20 can carry a load of 85 kilograms in addition to its own weight of 50 kilograms. It can easily clear obstacles 18-20 cm high and operate on slopes of 30 degrees. The Jueying X20 also includes depth-sensing cameras to perceive changes in the environment and a laser radar system for navigation. Thermal imaging helps it identify humans and other living beings in both ordinary and disaster environments, so it can help rescue teams by locating the heat signatures of people under the rubble.

Jueying X20 can travel across ruins, heaps of rubble, stairwells, and other unstructured passageways in high-risk outdoor and indoor post-disaster environments. Its ability to move in all directions and to maneuver within a constrained contact area helps the robot dog lessen the likelihood of secondary accidents. Thanks to its IP66 industrial-grade protection, the four-legged robot can carry out detection tasks in adverse weather such as torrential downpours, dust and sand storms, cold temperatures, and hail.

Robots from the Jueying series have found use in a variety of situations, including hazard identification, surveying hazardous terrain, inspecting high-voltage power facilities, and taking part in rescue operations. The average run time of the robot is around 4 hours, and with autonomous charging and several external power interfaces it can be recharged from different types of power outlets and sent back into action quickly. By integrating a wide range of application modules, including a long-distance communication system, a bi-spectrum PTZ camera, gas sensing equipment, an omnidirectional camera, and a pickup, the robotic solution supports long-distance control and image transmission, heat source tracking, and real-time detection of harmful gases and rescue calls, among other functions. The X20 can also be equipped with a robot arm for additional tasks.

Specifications:

- Gross Weight: 50kg

- Maximum Working Load: 85kg

- Safe Working Load: 20kg (Duration of operation >2h)

- Standing Dimension (L × W × H): 950mm × 470mm × 700mm

- Maximum Speed: 4.95m/s

- Average Mileage: 15km (3.6km/h, with a load of 0 kg)

- Average Runtime: 4h (with a load of 0 kg) / 2h (with a load of 20 kg)

- External Power Interface: 5V; 12V; 24V; 72V (BAT)

- External Communication Interface: Ethernet; WIFI; USB; RS485; RS232

- Optional Equipment Support: dual-light pan, robotic arm, debug shelf

- 4G/5G communication
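The mileage, speed, and runtime figures in the list above can be cross-checked with distance = speed × time. The short sketch below does that arithmetic; the function names are made up for illustration, and the loaded-range estimate assumes the robot keeps walking at 3.6 km/h with a 20 kg load, which the specifications do not state.

```python
def kmh_from_ms(speed_ms: float) -> float:
    """Convert metres per second to kilometres per hour."""
    return speed_ms * 3.6


def range_km(speed_kmh: float, runtime_h: float) -> float:
    """Distance covered at a constant speed over a given runtime."""
    return speed_kmh * runtime_h


top_speed_kmh = kmh_from_ms(4.95)      # maximum speed from the list
unloaded_range = range_km(3.6, 4.0)    # 3.6 km/h for 4 h with no load
loaded_range = range_km(3.6, 2.0)      # assumption: same pace with 20 kg

print(round(top_speed_kmh, 2))   # 17.82
print(round(unloaded_range, 1))  # 14.4
print(round(loaded_range, 1))    # 7.2
```

The unloaded result of about 14.4 km is in line with the quoted average mileage of 15 km.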

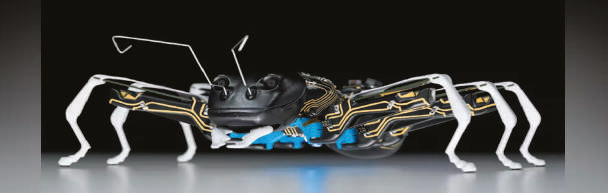

BionicANTs

Robotics company Festo is combining Selective Laser Sintering (SLS) with 3D MID technology to create experimental swarm robots known as BionicANTs. The bodies of the BionicANTs are made of polyamide powder, which is melted layer by layer with a laser. 3D Moulded Interconnect Devices (MID) feature spatial conductive tracks, which are visibly attached to the surface of shaped parts and act as circuit boards for electronic and mechatronic subassemblies. They make do without any cables and require little effort to assemble. Well-known areas of application for MID technology are automotive construction, medical and telecommunication engineering, and the aerospace industry. For the first time, Festo is now producing miniature robots with the technology. The BionicANTs serve as a platform for testing how the cooperative behaviour of creatures can be transferred to the world of technology using complex control algorithms. Like their natural role models, the BionicANTs work together under clear rules. They communicate with each other and coordinate both their actions and movements. Each ant makes its decisions autonomously, but in doing so is always subordinate to the common objective and thereby plays its part towards solving the task in hand.

In an abstract manner, this cooperative behaviour provides interesting approaches for the factory of tomorrow. Future production systems will be founded on intelligent components, which adjust themselves flexibly to different production scenarios and thus take on tasks from a higher control level. The BionicANTs demonstrate how individual units can react independently to different situations, coordinate with each other and act as an overall networked system.

By pushing and pulling together, the artificial ants move an object across a defined area. Thanks to this intelligent division of work, they are able to efficiently transport loads that a single ant could not move.
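One way to picture this division of work is a rule that every ant evaluates locally on shared position data, so that all ants reach the same team assignment without a central controller. The sketch below is a hypothetical illustration, not Festo's algorithm: the force values, the positions, and the functions `ants_required` and `should_join` are invented for the example.

```python
from math import ceil, hypot
from typing import Dict, Tuple

Position = Tuple[float, float]


def ants_required(load_force_n: float, force_per_ant_n: float) -> int:
    """Smallest team whose combined push or pull can move the load."""
    return ceil(load_force_n / force_per_ant_n)


def should_join(my_id: str, positions: Dict[str, Position],
                target: Position, needed: int) -> bool:
    """Each ant runs the same rule: the `needed` closest ants form the team.

    Ties are broken by id so every ant computes the same ranking.
    """
    ranked = sorted(
        positions,
        key=lambda ant: (hypot(positions[ant][0] - target[0],
                               positions[ant][1] - target[1]), ant),
    )
    return my_id in ranked[:needed]


# Hypothetical broadcast of ant positions and an object at (1, 0).
positions = {"ant1": (0.0, 0.0), "ant2": (3.0, 0.0),
             "ant3": (1.0, 1.0), "ant4": (5.0, 5.0)}
target = (1.0, 0.0)

needed = ants_required(load_force_n=0.5, force_per_ant_n=0.2)
team = [ant for ant in positions if should_join(ant, positions, target, needed)]
print(needed, team)  # 3 ['ant1', 'ant2', 'ant3']
```

Because the ranking depends only on data every ant receives, each unit decides autonomously while still serving the common objective.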

Technical data:

- Length: 135mm

- Height: 43mm

- Width: 150mm

- Weight: 105g

- Step size: 10mm

- Material, body and legs: polyamide, laser-sintered

- Material, feelers: spring steel

- 3D MID: laser structuring and gold plating by Lasermicronics

- Actuator technology, gripper: 2 trimorphic piezo-ceramic bending transducers (32.5 × 1.9 × 0.7 mm)

- Actuator technology, legs: 18 trimorphic piezo-ceramic bending transducers (47 × 6 × 0.8 mm)

- Stereo camera: Micro Air Vehicle (MAV) lab of the Delft University of Technology

- Radio module: JNtec

- Opto-electrical sensor: ADNS-2080 by Avago Technologies

- Processor: Cortex M4

- Rechargeable batteries: 380 mAh & Li-Po batteries in series, 8.4 V

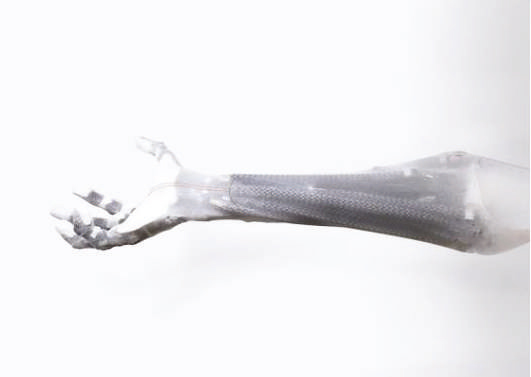

Androids: Clone Robotics

Previously, humanoid robots have been limited to applications in entertainment, education, and research. However, Polish startup Clone is looking to explore how super-strong humanoid robots could be applied in environments unsafe for humans, including nuclear waste facilities, wet labs, meat processing plants, space stations, and chemical plants. The basic materials to consider when designing a robotic arm are steel, rubber, silicone, aluminum, Kevlar, and biodegradable materials. The hard materials (steel, aluminum, and so on) can be used to build the core or skeletal structure of the Clone arms, while the softer materials (silicone, biodegradable smart materials, and so on) make up the muscle mass and the outer covering, or skin, of the functional limb. Muscle contraction and the other functions of the limb rely on pneumatic principles.

Clone has so far developed the robot arm and hand and is working on building out the torso. Its model uses steel and plastic as the base materials and silicone for the outer coverings. The company also replaced the pneumatic air muscles with a hydraulic version for better maneuverability and easier maintenance of the prosthetics.

What makes Clone special is the use of hydraulics to mimic the multi-articulation of human hands. Most macro-scale robots today, including those with anthropomorphic hands, use DC motors for actuation. While this may work for joints with three degrees of freedom, human-level hands require actuators that are far more robust to chaos in the environment. A general-purpose robot performing many tasks in a “chaotic” environment such as a human household will need to actuate its hands with a mechanism that can adapt to strong, random forces from the environment.

A human hand offers a total of 27 degrees of freedom to perform all sorts of tasks. To approach that range of motion, the latest versions of the Clone arm include a total of 36 muscles. The beta Clone Hand includes 16 magnetic encoders, which measure joint angles and velocities, and a total of 35 pressure sensors, covering almost every muscle and valve. These sensors help monitor and regulate the bionic arm.
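Pressure sensors on each muscle make closed-loop regulation possible: a controller compares the measured pressure with a target and opens or closes a valve accordingly. The sketch below shows a plain proportional controller as one possible illustration. It is not Clone's control software; the gain, the pressure values in kPa, and the function `valve_command` are hypothetical.

```python
def valve_command(target_kpa: float, measured_kpa: float,
                  gain: float = 0.02) -> float:
    """Proportional control of one muscle valve.

    Returns a valve opening in [-1, 1]: positive fills the muscle,
    negative drains it. The output is clamped to the valve's range.
    """
    command = gain * (target_kpa - measured_kpa)
    return max(-1.0, min(1.0, command))


# Hypothetical readings for three of the hand's muscles.
targets = [300.0, 300.0, 300.0]
readings = [280.0, 300.0, 500.0]

commands = [valve_command(t, m) for t, m in zip(targets, readings)]
print([round(c, 2) for c in commands])  # [0.4, 0.0, -1.0]
```

The third muscle is far above its target, so the command saturates at the fully draining position.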

Agricultural Robotics: Carbon Robotics’ Autonomous LaserWeeders

Carbon Robotics’ Autonomous LaserWeeders take machine vision technology from manufacturing and apply it to agriculture, creating an autonomous robot capable of independently identifying and destroying weeds among crops. The robot is designed for 24-hour use, contributing to increased crop yields and lower production costs. Its design follows that of tractors and other traditional agricultural equipment to meet the demands of the industrial agriculture environment.

The Autonomous LaserWeeder from Carbon Robotics can work all day on its 75-gallon fuel capacity. It uses 4 hydraulic drive motors along with GPS and a visual guidance system to stay inside the lines of the field, travel through furrows, and turn around to start scanning the following row. Twelve high-resolution cameras scan the field, crops, and weeds in real time. While the machine moves, a rugged onboard supercomputer uses machine learning to quickly identify unwanted weeds among the crops. The meristem of each weed is then hit with a high-powered laser beam, whose thermal energy burns away the weed. Each laser operates at 150W and has an accuracy of about 3mm. The Autonomous LaserWeeder runs 8 laser units simultaneously to deliver fast zaps on budding weeds and can eliminate over 100,000 weeds every hour.

Specifications:

- Weight: 9,500lb

- Track width: 80in

- Wheelbase: 110 in (9.2 ft)

- Vehicle speed: 5 mph

- Coverage: 15-20 acres/day

- 12 high-resolution cameras targeting weeds

- 8 independent weeding modules

- 150W CO2 lasers with 3mm accuracy

- Ready to fire every 50 milliseconds

- 74-hp Cummins diesel QSF2.8

- 4 hydraulic drive motors

- 75-gallon fuel capacity
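The throughput figures above can be related to each other with some simple arithmetic. The sketch below assumes that "ready to fire every 50 milliseconds" applies to each of the 8 laser modules independently, which the source does not spell out; the function names are illustrative.

```python
LASERS = 8
FIRE_INTERVAL_S = 0.050     # assumed minimum interval per laser module
WEEDS_PER_HOUR = 100_000    # quoted elimination rate


def max_shots_per_hour(lasers: int, interval_s: float) -> int:
    """Upper bound on shots if every laser fired at its minimum interval."""
    return round(lasers * 3600 / interval_s)


def weeds_per_laser_per_second(weeds_per_hour: float, lasers: int) -> float:
    """Average rate each laser must sustain to reach the quoted total."""
    return weeds_per_hour / 3600 / lasers


ceiling = max_shots_per_hour(LASERS, FIRE_INTERVAL_S)
rate = weeds_per_laser_per_second(WEEDS_PER_HOUR, LASERS)

print(ceiling)                              # 576000
print(round(rate, 2))                       # 3.47
print(round(WEEDS_PER_HOUR / ceiling, 3))   # 0.174
```

Under this assumption, 100,000 weeds per hour is roughly 17% of the theoretical firing ceiling, or about 3.5 weeds per second per laser.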

Care Robotics: Hello Robot - Stretch Robot

Hello Robot's Stretch is a robot built for researchers by researchers, aimed at robotics projects addressing care needs.

Historically, versatile mobile manipulators have been large, heavy, and expensive. Stretch changes that.

Thanks to its pared-back design, it performs a wide range of tasks in a simple, intuitive manner. The Stretch robot is designed for autonomous operation. It comes with a calibrated robot model (URDF) that is well aligned to 3D camera images, and customizable open source calibration code in Python. ROS integration simplifies the use of existing ROS packages for autonomy, including the ROS navigation stack.
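A URDF robot model is an XML file describing links and joints, so it can be inspected with ordinary Python tooling. The sketch below reads joint limits from a small URDF fragment using the standard library. The fragment is a made-up example for illustration, not Stretch's actual model, and the joint names and limit values are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Dict, Tuple

# Hypothetical URDF fragment; names and limits are invented for this example.
URDF_SNIPPET = """
<robot name="example_mobile_manipulator">
  <link name="base_link"/>
  <link name="lift_link"/>
  <link name="arm_link"/>
  <joint name="lift_joint" type="prismatic">
    <parent link="base_link"/>
    <child link="lift_link"/>
    <limit lower="0.0" upper="1.1" effort="100" velocity="0.2"/>
  </joint>
  <joint name="arm_joint" type="prismatic">
    <parent link="lift_link"/>
    <child link="arm_link"/>
    <limit lower="0.0" upper="0.5" effort="100" velocity="0.2"/>
  </joint>
</robot>
"""


def joint_limits(urdf_xml: str) -> Dict[str, Tuple[float, float]]:
    """Map each joint name to its (lower, upper) position limit."""
    root = ET.fromstring(urdf_xml.strip())
    limits = {}
    for joint in root.findall("joint"):
        limit = joint.find("limit")
        if limit is not None:
            limits[joint.get("name")] = (float(limit.get("lower")),
                                         float(limit.get("upper")))
    return limits


limits = joint_limits(URDF_SNIPPET)
print(limits)  # {'lift_joint': (0.0, 1.1), 'arm_joint': (0.0, 0.5)}
```

In a ROS setup the same file would normally be consumed by existing packages rather than parsed by hand; the example only shows what kind of information the model carries.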

Image credit: Hello Robot

Specifications:

- Interact with people using a low mass, contact-sensitive body

- Work in clutter with a compact footprint and a slender manipulator

- Go outside the lab: it weighs only 51 lb

- Everything included: a gripper, a computer, sensors, and software

- Ready for autonomy: calibrated, Python interfaces and ROS integration, autonomy demos in Python, open source code

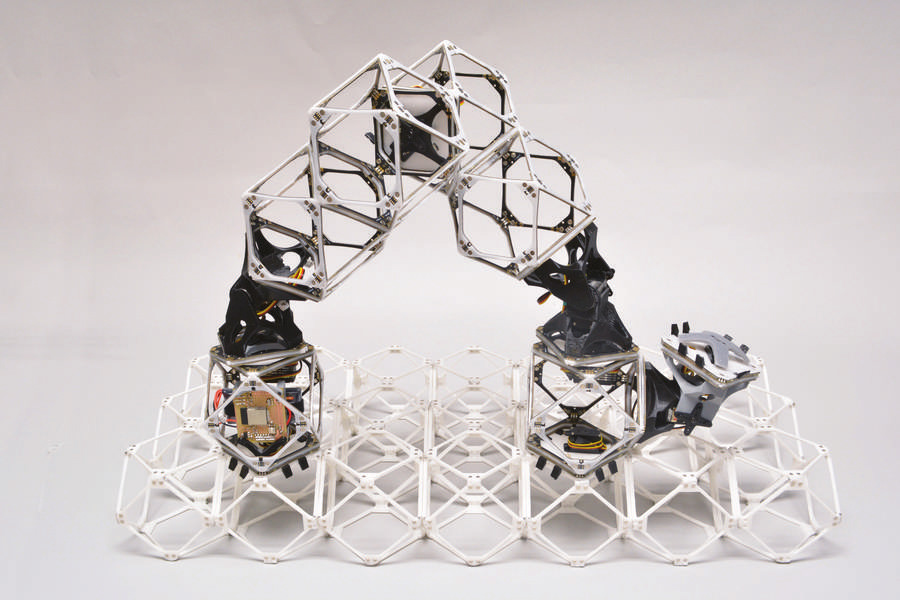

Voxels Assembler Robot

MIT researchers have made substantial progress towards robots that are practically and economically capable of assembling almost anything, including objects much larger than themselves, such as larger robots, structures, and cars. In contrast to past iterations of assembler bots, which were linked by bundles of wires to their power supply and operating systems, the Center for Bits and Atoms (CBA) at MIT has developed a new system of voxel bots in which each unit can transmit both power and information to the next. This could make it possible to build structures that not only support loads but also lift, move, and manipulate materials, including the voxels themselves.

The new method builds large, usable structures from arrays of tiny, identical subunits called voxels. The robots themselves consist of a string of several voxels joined end to end. They can move like inchworms to the appropriate spot, where they grasp another voxel using attachment points on one end, connect it to the growing structure, and release it there. At every step of assembly, the robots have to make decisions: to help with the work that follows, the system might add to the structure, build an identical or bigger robot, or both.

For instance, before producing a new car, a manufacturer may spend a year on tooling alone. The new system would skip that entire procedure. Because of these potential efficiencies, CBA director Neil Gershenfeld and his students have been collaborating closely with automakers, aviation firms, and NASA. Even the comparatively low-tech building construction sector might stand to gain. Although there is growing interest in 3-D printed homes, the printing equipment needed today is at least as large as the home being created. It could be more advantageous to have such buildings constructed by swarms of tiny robots instead.
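Moving to "the appropriate spot" across a voxel structure can be modelled as a shortest-path search over the cells already placed. The sketch below uses breadth-first search on a set of integer voxel coordinates. It is a simplified illustration and not the MIT team's planner: the structure, the function `crawl_path`, and the rule that a robot steps only between face-adjacent voxels are assumptions.

```python
from collections import deque
from typing import List, Optional, Set, Tuple

Voxel = Tuple[int, int, int]

# The six face-adjacent directions in a cubic voxel lattice.
NEIGHBORS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def crawl_path(structure: Set[Voxel], start: Voxel,
               goal: Voxel) -> Optional[List[Voxel]]:
    """Shortest sequence of placed voxels from start to goal, or None."""
    if start not in structure or goal not in structure:
        return None
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        x, y, z = cell
        for dx, dy, dz in NEIGHBORS:
            nxt = (x + dx, y + dy, z + dz)
            if nxt in structure and nxt not in parent:
                parent[nxt] = cell
                queue.append(nxt)
    return None


# Hypothetical L-shaped structure of five voxels.
structure = {(0, 0, 0), (1, 0, 0), (2, 0, 0), (2, 1, 0), (2, 2, 0)}
path = crawl_path(structure, (0, 0, 0), (2, 2, 0))
print(path)  # [(0, 0, 0), (1, 0, 0), (2, 0, 0), (2, 1, 0), (2, 2, 0)]
```

A fuller planner would also account for the robot's own length and for voxels being added while it moves.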

Image credit: MIT

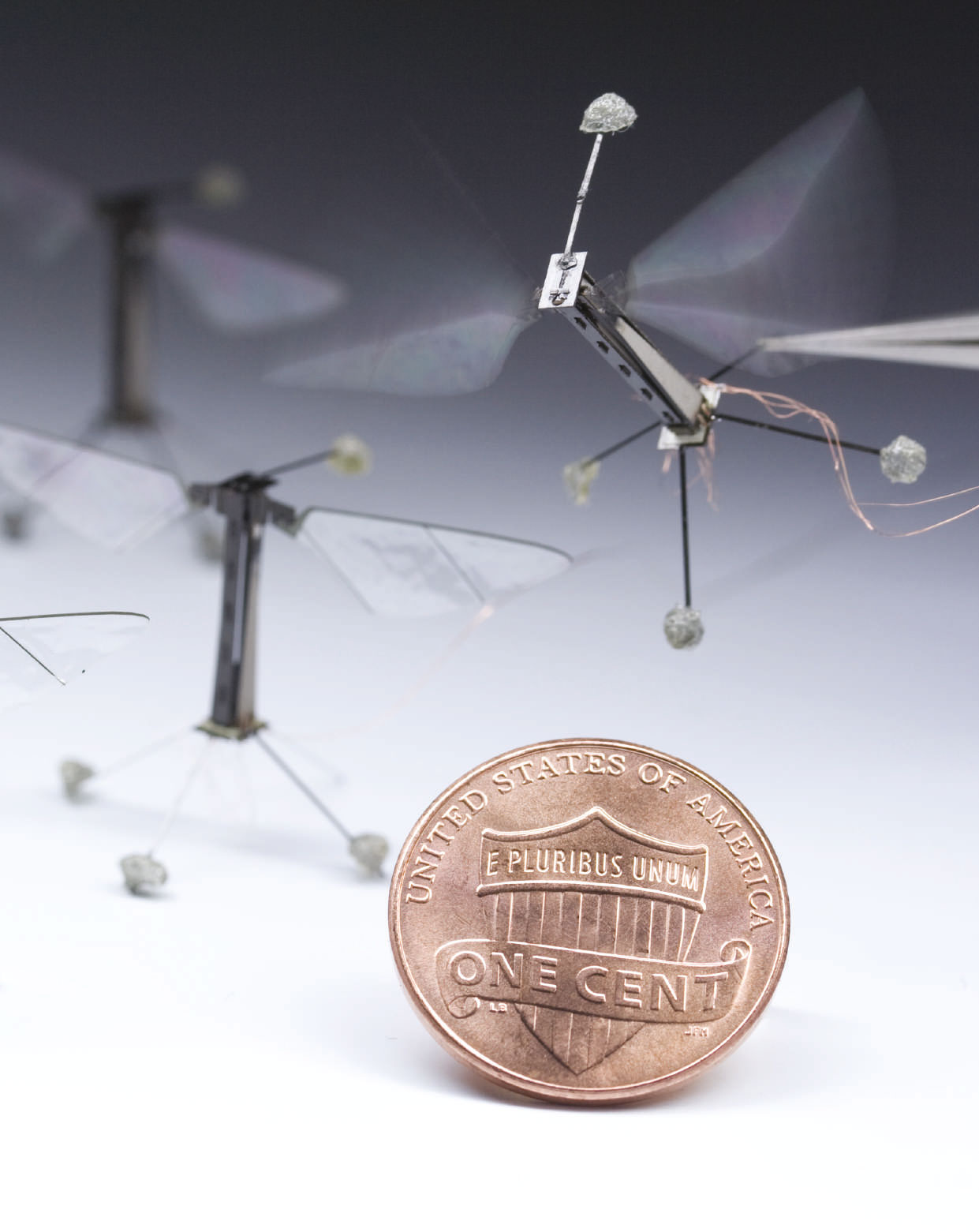

RoboBee

Inspired by the biology of a bee, researchers developed a tiny mechanical insect that first achieved flight in 2012 by flapping its wafer-thin wings approximately 120 times per second. Prof. Robert Wood of the Harvard Microrobotics Lab experimented with the design and materials of the tiny robot and conducted the first controlled flight test of the RoboBee. A RoboBee takes flight using “artificial muscles” made of polymers that contract whenever a voltage is applied. It is roughly the size of a paper clip and weighs less than one-tenth of a gram. Some RoboBee models have modifications that let them swim underwater as well as fly, and “perch” on different surfaces using static electricity. RoboBee could be used for a variety of tasks, including agricultural pollination, high-resolution meteorology, climate, and environmental monitoring, espionage, rescue operations, and surgical procedures.

RoboBee is made of different materials, including ceramics, composites, polymers, and metals. Researchers at the Wyss Institute have created novel manufacturing techniques for building RoboBees, including so-called Pop-Up microelectromechanical systems (MEMS) techniques that have already significantly pushed the limits of current robotics engineering and design. In contrast to conventional MEMS approaches or manual “nuts-and-bolts” assembly, the Pop-Up MEMS method efficiently builds many micromachines at once while also producing sophisticated, articulated mechanisms. Pop-Up MEMS devices can also combine piezoelectric actuators, integrated circuits, micrometer-scale mechanical features, a range of materials, and true 3D geometries. Pop-Up MEMS may make it possible to mass-produce sophisticated micro-robots with sizes ranging from a few nanometers to a few centimeters, like the Wyss Institute's RoboBees, as well as unique implantable medical devices and specialized optical systems.

Image credit: Wyss Institute

Specifications:

- Wing span: 3 cm (1.2 inches)

- Weight: 80 milligrams

- Flapping speed: 120/sec

- Speed: 3.6 km/h (2.2 mph)

- Sensors: Gyroscopes, optical flow sensors, ocelli sensor (insect-inspired horizon detection sensors)

- Actuators: Piezoelectric bending bimorph cantilevers

- Degree of Freedom: 3 to 5 (Depending on the model)
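The flapping rate, speed, and wingspan listed above combine into a few derived quantities that give a feel for the scale involved. The sketch below is plain arithmetic on the listed values; treating 3.6 km/h as a steady cruising speed is an assumption made for the calculation.

```python
FLAP_HZ = 120          # wingbeats per second
SPEED_KMH = 3.6        # listed speed
WINGSPAN_M = 0.03      # 3 cm

speed_ms = SPEED_KMH / 3.6                        # km/h to m/s
wingbeat_period_ms = 1000 / FLAP_HZ               # duration of one wingbeat
travel_per_beat_mm = speed_ms / FLAP_HZ * 1000    # distance covered per wingbeat
wingspans_per_second = speed_ms / WINGSPAN_M      # speed in body-scale units

print(speed_ms)                         # 1.0
print(round(wingbeat_period_ms, 2))     # 8.33
print(round(travel_per_beat_mm, 2))     # 8.33
print(round(wingspans_per_second, 1))   # 33.3
```

At that pace the robot covers about 33 wingspans every second while advancing roughly 8 mm per wingbeat.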
